As has already become clear, the contents of the Torrent cannot be considered an archive, but rather the result of a distributed web harvesting effort.[1] Many of the Torrent’s files are redundant or conflicting, because of uppercase/lowercase issues, different URLs pointing to the same file, and so on.

Before these files can be put together again and served via HTTP,[2] decisions need to be made about which file should be served when a certain URL is requested. For most URLs this decision is easy: a request for

http://www.geocities.com/EnchantedForest/3151/index.html

should probably be answered with the corresponding file in the directory

www.geocities.com/EnchantedForest/3151/index.html.

However, this file exists three times:

- www.geocities.com/EnchantedForest/3151/index.html,
- geocities.com/EnchantedForest/3151/index.html (without the “www”) and
- YAHOOIDS/E/n/EnchantedForest/3151/index.html.

And all of them differ in contents!

While this particular case is easy (the difference between these files is a tracking code inserted by Yahoo!, randomly generated for every visitor of the page), in general each conflict has to be examined, especially when the last-modified dates differ.
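The three locations listed above can be derived mechanically from a requested URL. The following is a minimal Python sketch, not taken from the published scripts: the function name `candidate_paths` is hypothetical, and the `YAHOOIDS/<first letter>/<second letter>/…` layout is only an assumption inferred from the single example shown here.

```python
from urllib.parse import urlsplit


def candidate_paths(url):
    """Return Torrent-relative paths that might hold the file for `url`."""
    # Drop scheme and host, keep e.g. "EnchantedForest/3151/index.html".
    path = urlsplit(url).path.lstrip("/")
    # First path segment, e.g. "EnchantedForest".
    top = path.split("/", 1)[0]

    candidates = [
        f"www.geocities.com/{path}",
        f"geocities.com/{path}",  # same path without the "www"
    ]
    # ASSUMPTION: YAHOOIDS is sharded by the first two characters of the
    # top-level name, as in YAHOOIDS/E/n/EnchantedForest/...
    if len(top) >= 2:
        candidates.append(f"YAHOOIDS/{top[0]}/{top[1]}/{path}")
    return candidates


for p in candidate_paths("http://www.geocities.com/EnchantedForest/3151/index.html"):
    print(p)
```

Which of the candidates should actually answer the request is exactly the decision that still has to be made per conflict; the sketch only enumerates where to look.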

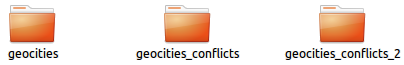

Many conflicts might be resolved automatically; however, the first step was to identify conflicts and remove redundancies from the file system. This means actually changing the source material: the files contained in the Torrent and how they are organized. Ethically this is problematic, but practically I do not see another way than reducing the number of decisions to be made for a reconstruction.
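To illustrate the distinction between a redundancy and a conflict, here is a minimal Python sketch that groups copies of a file by content hash. It is not the logic of merge_directories.pl; the names `sha1_of` and `classify` and the four status labels are made up for this example, and comparing by SHA-1 is just one plausible way to decide whether two copies are byte-identical.

```python
import hashlib
from collections import defaultdict
from pathlib import Path


def sha1_of(path, chunk_size=1 << 20):
    """Hash a file in chunks so large files need not fit in memory."""
    h = hashlib.sha1()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def classify(root, candidates):
    """Group the existing candidate files by content hash.

    Returns (status, groups), where status is one of
    "missing", "unique", "redundant" or "conflict" (hypothetical labels).
    """
    groups = defaultdict(list)
    for rel in candidates:
        full = Path(root) / rel
        if full.is_file():
            groups[sha1_of(full)].append(rel)

    n_files = sum(len(paths) for paths in groups.values())
    if n_files == 0:
        status = "missing"      # no copy exists at all
    elif n_files == 1:
        status = "unique"       # exactly one copy, nothing to decide
    elif len(groups) == 1:
        status = "redundant"    # several copies, all byte-identical
    else:
        status = "conflict"     # at least two copies differ in content
    return status, dict(groups)
```

Only the "redundant" case can be cleaned up without a decision, by keeping one copy. A "conflict" still needs examination, for instance to see whether the copies differ only in an inserted tracking code.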

To make the alteration more acceptable, I am publishing all the tools that I created for handling the Torrent. They can be found on GitHub.[3] merge_directories.pl is the script that did most of the work of identifying conflicts. The directory of scripts created for handling the Torrent contains a list of the scripts that should be run, in order, after downloading to normalize the data. Running them all in a row took me 29 hours and 47 minutes.

The original Geocities Torrent contains 36’238’442 files. My scripts managed to identify 3’198’141 redundant files, a quite impressive share of about 8.8%. There are still 768’884 conflicts, 475 of them involving triple files. And this is even before taking into account case-related redundancies or conflicts, which I hope to solve with the help of a database.

Ingesting is next.

1. See Tips for Torrenters, Cleaning Up The Torrent.

2. See Authenticity/Access.

3. The simple ones are Unix shell scripts; most Linux distributions come with a rich set of tools for file handling. More complex scripts are written in Perl. I hold the opinion that anybody involved in data preservation and digital archeology should be knowledgeable in Perl for mangling large amounts of data and interfacing with everything ever invented.